|

12/25/2023 0 Comments Athena json query

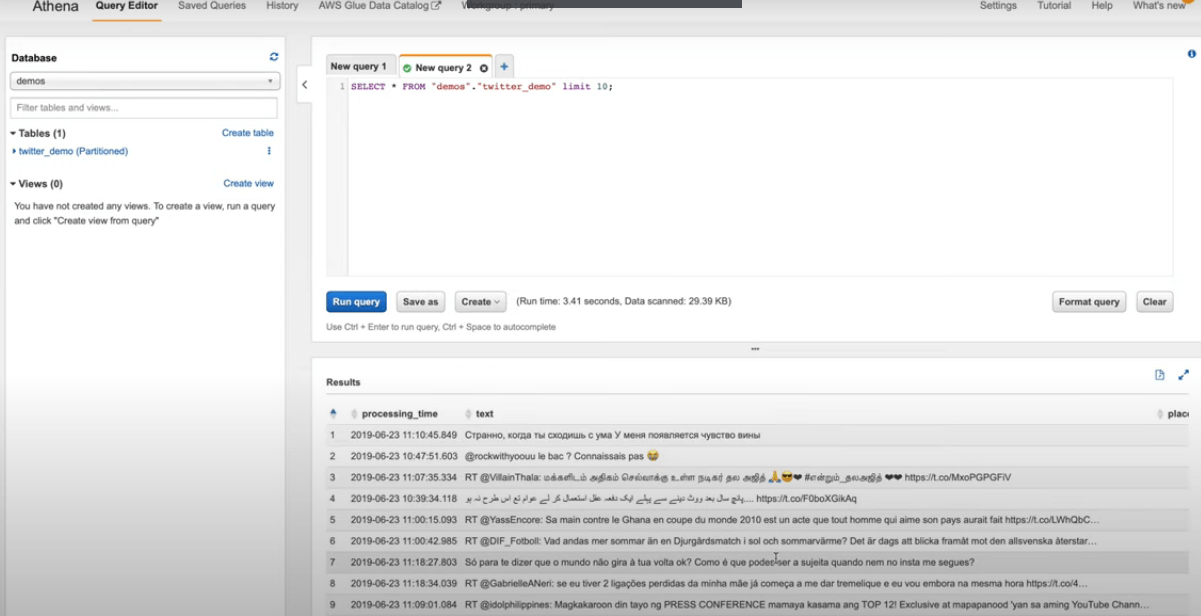

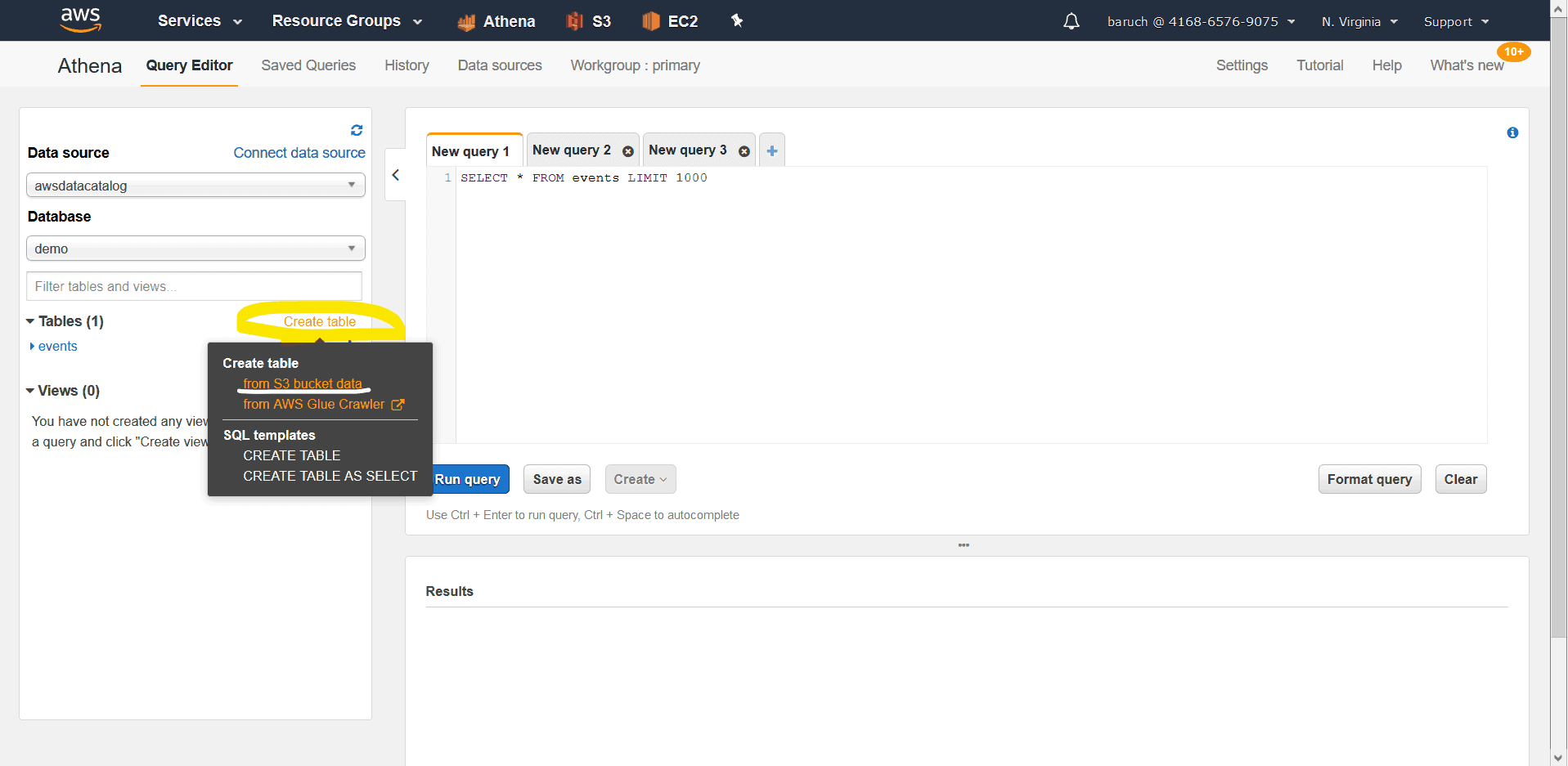

After running the crawler, a table gets created which stores all the metadata about the JSON file for querying purpose.Īt this point we could actually query the data, but best practice is to run an ETL (Extract, Transform & Load), to transform the data so it is more efficient to query later on. This will parse all the files from S3 bucket. Next create a crawler using the classifier, and select input as the JSON data from stored S3 bucket path. Since in our use case we are using JSON data set, we can use JSON custom classifier ( regex) where we can mention a JSON path expression which can be used to define a JSON structure and table schema. Athena uses these tables for querying.įor proper grouping of Glue metadata tables, create customized classifiers based on different data types such as ‘JSON’. The crawler will traverse the specified S3 files and group the things into tables. First it provides data crawlers that use inbuilt and/or custom classifiers to try and parse the JSON data. This is where Glue comes into the picture. Now we have the JSON data, we need to structure it enough that Athena can query it. This data is in JSON format which needs to be stored in S3 bucket as mentioned in the above diagram. In this use case, we can use the claims data of medical insurance company or vehicle contracts. We can use Amazon S3 for data storage, data transformation (ETL) using Glue and then data visualization (Analytics) via Athena & QuickSight.īelow diagram represents the workflow of usage of these AWS services.įirst we need to generate our data set. In order to fulfill this end to end requirement usage of AWS services is the best option. The problem here is to handle such a large dataset and generate complex reporting by doing data transformation. Following is the schema to read orders data file.Process raw claims data (medical insurance or vehicle contracts related data) which is a large dataset and generate reporting visuals with the help of processed data. Step 3: Create Athena Table Structure for nested json along with the location of data stored in S3Īthena has good inbuilt support to read these kind of nested jsons. Once database got created, create a table which is going to read our json file in s3.Create database in athena with following query like traditional sql query.It will directory query the file at run time and provide the result. Athena looks like a relational table structure but it will not store any data. This step needs to be careful while creating Athena structure for the provided data file. Following is the screenshot of file uploaded to s3. Once data file is ready you can import to any S3 bucket using s3 upload file option from AWS Console. Its converted to this Flat structure Step 1: Upload File To S3 Note : Athena reads the file in the following format only. The following is the nested json structure which can exhibit data of order and orderliness. In this article we will first take some sample nested JSON data structure which we will transform to flat structure. Athena will automatically scale up the required CPU to process it without any human intervention. Athena is the most powerful tool that can scan millions of nested documents on S3 and transform it to flat structure if needed. This Article shows how to import a nested json like order and order details in to a flat table using AWS Athena. Frontend Development using ReactJS and NextJS Advanced UIs with ReactJS and NextJS.Content Management Solutions Expert CMS solutions Headless or Traditional.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed